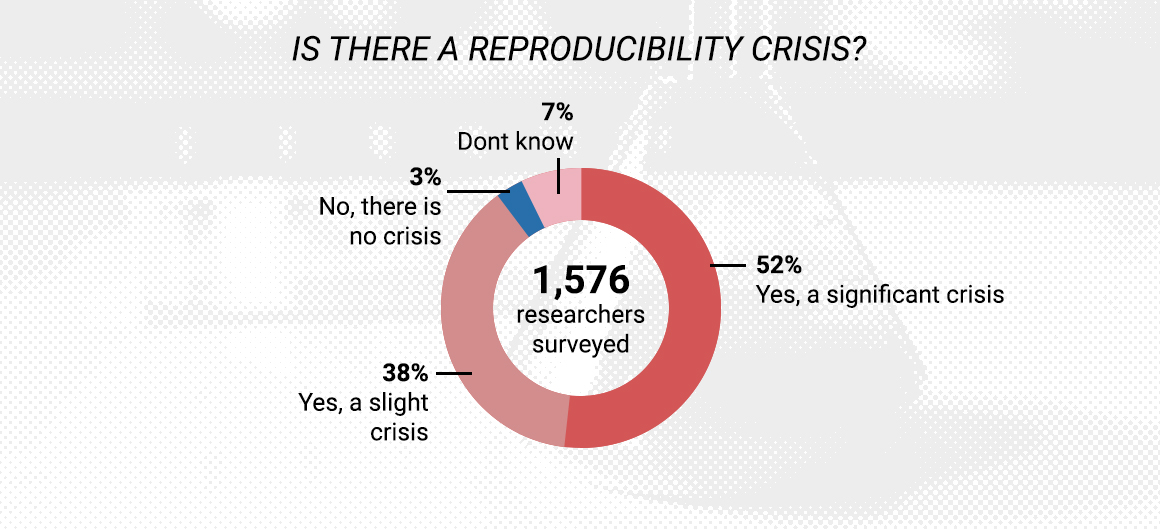

Is There a Reproducibility Crisis?

In 2016, Monya Baker carried out an influential survey of 1,500 scientists for Nature, asking whether they had ever had trouble reproducing data.

More than 70% of researchers reported that they had tried and failed to reproduce another scientist’s experiments, and more than half had failed to reproduce their own.

Since this Nature article, the narrative of the reproducibility crisis has featured extensively in both the scientific literature and the public domain.

The scientific community is beginning to galvanise around this debate, and the extent and impact of the problem are being investigated through a new area of research, meta-research. All articles to date acknowledge some inaccuracy in reported data.

In 2019, Stefan Guttinger looked at the basic taxonomy of scientific failure, recognising that a failure to reproduce an experimental outcome is due either to a failure of theorising, namely that the existing model is incorrect, which can lead to over-generalisation, or to an inability to reproduce a previously observed event.

This latter situation can be due to over-generalisation as well as to methodological failures, e.g. poor reagents, poor experimental design, poor technique or, on very rare occasions, fraud.

Mycoplasma testing

Whilst the tools are readily available for researchers to minimise methodological failures, for example Mycoplasma testing and cell line authentication testing, rigorous checkpoints are not being enforced across the community, with journals and funding agencies reluctant to tackle the elephant in the room.

The International Journal of Cancer is, however, an exception. In 2017, Fusenig et al. outlined the challenging process they had undertaken to ensure that journal submissions were accompanied by cell authentication data.

Alongside this apathy amongst scientific gatekeepers, there is also evidence that scientists are not taking the initiative themselves. For example, in 2018, Shannon reported another questionnaire of ~198 Australian researchers, of whom 54% had not tested any cell lines for Mycoplasma in the past year, and 82% had not carried out any cell line authentication.

Take Mycoplasma, for example. It is well known that these mollicutes compete with their host cells for nutrients and biosynthetic precursors, and can alter DNA, RNA and protein synthesis, diminish amino acid and ATP levels, introduce chromosomal alterations, and modify host-cell plasma membrane antigens.

Yet in 2015, Olarerin-George and Hogenesch looked at the prevalence of Mycoplasma contamination in the NCBI RNA-Seq archive and found that, at a minimum, around 11% of series were contaminated, across 9395 rodent and primate samples. Interestingly, these samples have been published in some of the most prestigious journals and have subsequently been generally well cited, with two of the series having over 500 citations.

Crisis?

Whether or not this is a ‘crisis’, as contemplated by Fanelli in PNAS (2018), it is certainly something that we need to work on as a community, particularly when politicians are using the reproducibility crisis to denounce the legitimacy of scientific data, for example on climate change.

Whilst we at Cryoniss will not be able to rectify this global challenge on our own, we can work with the community and scientists to improve the quality of data at the bench.

As such, we have partnered with some of the top scientists in the field of reagent quality, alongside Perfectus Biomed, to provide our customers with access to the latest qualified scientific data to ensure the validity of their research.

As Christopher Korch suggested to me at the ISBIOTECH conference, if every laboratory, department or company had its own Reagent Quality Policy, that would be a great start. For something so simple, why not put one together?